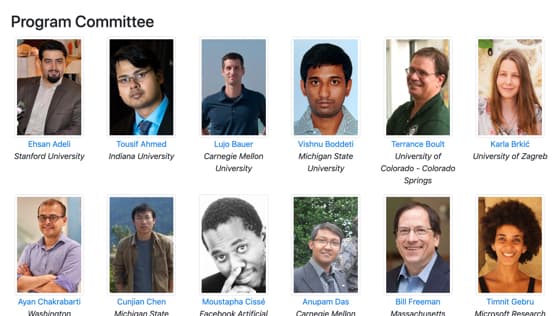

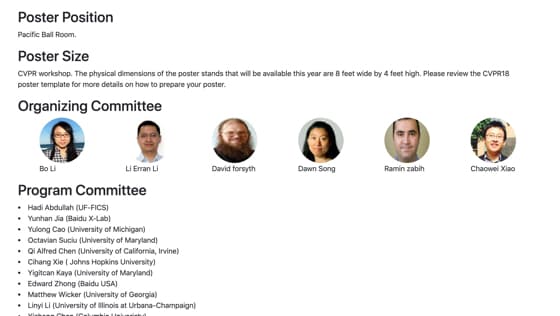

Adversarial Machine Learning in Real-World Computer Vision Systems and Online Challenges (AML-CV)

Workshop at CVPR 2021

As computer vision models are being increasingly deployed in the real world, including applications that require safety considerations such as self-driving vehicles, it is imperative that these models are robust and secure even when subject to adversarial attacks.

This workshop will focus on recent research and future directions for security problems in real-world machine learning and computer vision systems. We aim to bring together experts from the computer vision, security, and robust learning communities to highlight recent work in this area and to clarify the foundations of secure machine learning. We seek to come to a consensus on a rigorous framework to formulate adversarial machine learning problems in computer vision, characterize the properties that ensure the security of perceptual models, and evaluate the consequences under various adversarial models. Finally, we hope to chart out important directions for future work and for collaborations across the computer vision, machine learning, security, and multimedia communities.

The workshop consists of invited talks from experts in this area, research paper submissions, and a large-scale online competition on adversarial attacks and defenses against real-world systems. The competition includes two tracks covering two novel topics: (1) adversarial attacks on ML defense models, and (2) unrestricted adversarial attacks on ImageNet.

Paper submission deadline

Notification to authors

Camera ready deadline

Registration opens

Submission starts

Registration deadline

Submission deadline

Challenge award notification

CVPR conference

Topics

Topics include but are not limited to:

Adversarial Attacks on ML Defense Models

Most machine learning classifiers today are vulnerable to adversarial examples, a topic that has been studied widely in recent years. A large number of adversarial defense methods have been proposed to mitigate the threat of adversarial examples. However, some of them can be broken by more powerful or adaptive attacks, which makes it very difficult to judge the effectiveness of current and future defenses. Without thorough and correct robustness evaluation of defenses, progress in this field will be limited.

To accelerate research on reliable evaluation of the adversarial robustness of current defense models in image classification, we organize this competition to motivate novel attack algorithms that evaluate adversarial robustness more effectively and reliably. Participants are encouraged to develop strong white-box attack algorithms to find the worst-case robustness of various models.

This competition will be conducted on an adversarial robustness evaluation platform, ARES (https://github.com/thu-ml/ares).
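As a rough illustration of what a white-box attack looks like, the sketch below runs a projected-gradient-style attack against a toy linear classifier in plain Python. It is not the competition's method and does not use the ARES API. The model, the weights, and the names `predict_logit`, `loss_gradient`, and `pgd_attack` are all hypothetical, and the perturbation budget `eps`, step size, and iteration count are arbitrary assumptions. Clipping to a valid pixel range is omitted for brevity.

```python
import math


def sign(v):
    """Return -1, 0, or 1 depending on the sign of v."""
    return (v > 0) - (v < 0)


def predict_logit(w, b, x):
    """Logit of a toy linear classifier: w . x + b (positive means class 1)."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def loss_gradient(w, b, x, y):
    """Gradient of the logistic loss with respect to the input x, for label y in {0, 1}."""
    p = 1.0 / (1.0 + math.exp(-predict_logit(w, b, x)))
    return [(p - y) * wi for wi in w]


def pgd_attack(w, b, x, y, eps=0.3, step=0.1, iters=10):
    """Iterated signed-gradient ascent on the loss, projected onto an L-infinity ball.

    White-box: the attacker reads the model parameters (w, b) to compute gradients.
    """
    x_adv = list(x)
    for _ in range(iters):
        grad = loss_gradient(w, b, x_adv, y)
        # Move each coordinate in the direction that increases the loss.
        x_adv = [xa + step * sign(g) for xa, g in zip(x_adv, grad)]
        # Project back so that every coordinate stays within eps of the clean input.
        x_adv = [min(max(xa, xi - eps), xi + eps) for xa, xi in zip(x_adv, x)]
    return x_adv


# Hypothetical toy model and input, chosen only for illustration.
w, b = [2.0, -1.0], 0.0
x, y = [0.2, 0.1], 1
x_adv = pgd_attack(w, b, x, y)
print("clean logit:", predict_logit(w, b, x))
print("adversarial logit:", predict_logit(w, b, x_adv))
```

In this toy case the clean input is classified as class 1 and the perturbed input crosses the decision boundary while staying within the assumed budget. Evaluating real defenses typically requires stronger or adaptive variants of this idea.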

Unrestricted Adversarial Attacks on ImageNet

Deep neural networks have achieved state-of-the-art performance on various visual recognition problems. Despite this success, the security of deep models has also raised many concerns in industry. For example, deep neural networks are vulnerable to small, imperceptible perturbations of their inputs (the perturbed inputs are called adversarial examples). Beyond small and imperceptible perturbations, in real-world scenarios more threats to deep models come from unrestricted adversarial examples: the attacker makes large and visible modifications to the image that cause the model to misclassify it but do not affect how a human observer perceives it. Unrestricted adversarial attacks have been a popular direction in adversarial attack research in recent years. We hope that this competition will lead competitors not only to understand and explore unrestricted adversarial attacks on ImageNet, but also to refine and summarize innovative and effective unrestricted attack schemes, so as to advance academic research on adversarial attacks.
